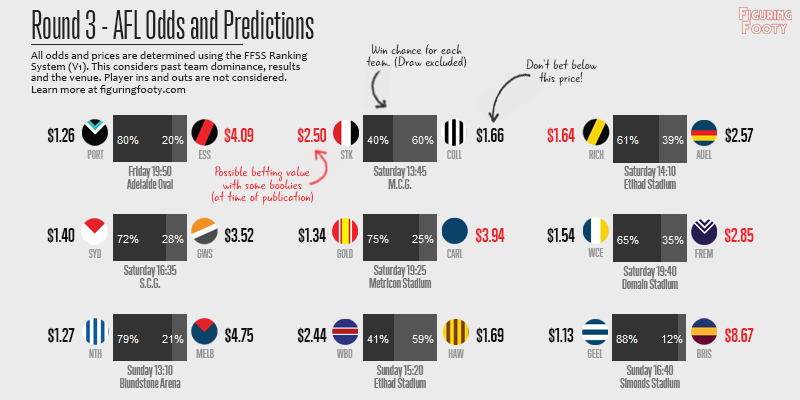

Only two weeks in and we can already see “the most even comp in years” narrative start to play out. There are 10 teams in…

Category: Ratings

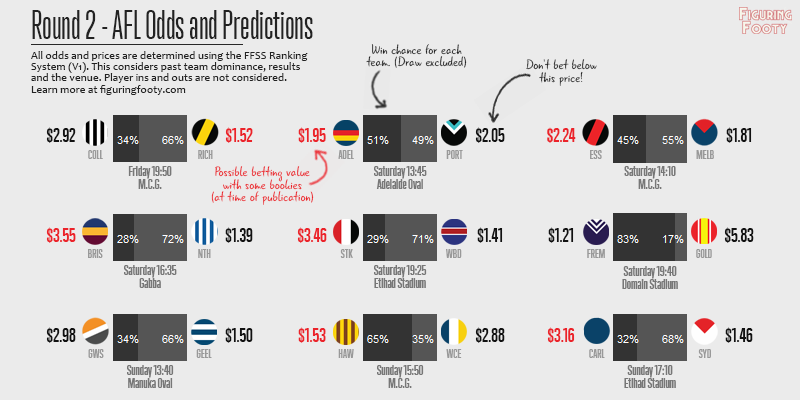

If Round 1 was a display of lucky tipping, then Round 2 came around to show us all that you just can’t count on lady…

2016 Round 2 – Tips and Predictions: Home teams travel away

Posted in Ratings, Tipping, and Uncategorized

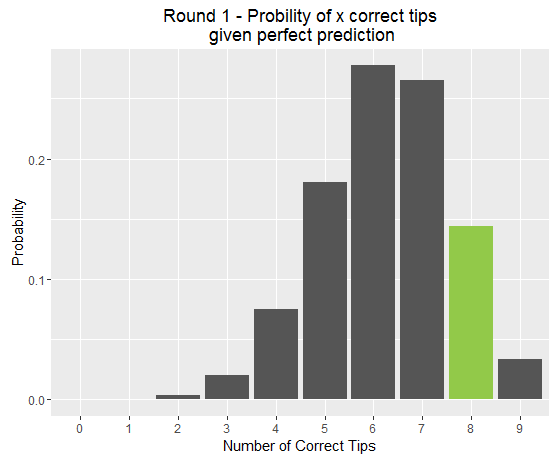

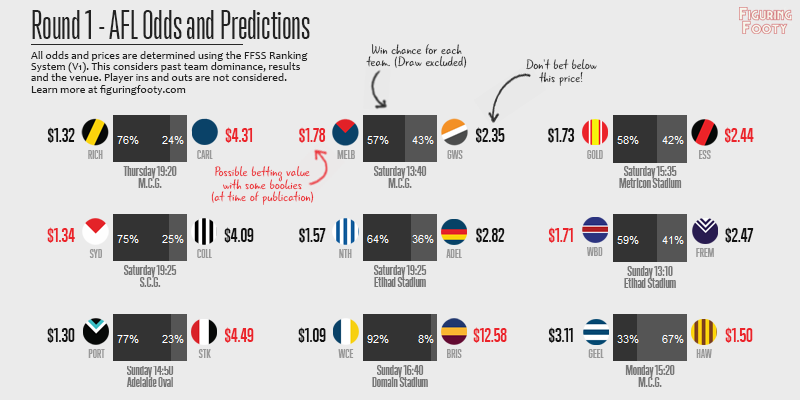

Week 1 proved to be a very successful round of tipping for the FFSS (Figuring Footy Scoring Shots) predictor. 8 out of 9 games were tipped correctly, and if you followed through on my recommended bets you would have netted yourself a tidy little profit of +13.02 Units (which would be about $130 if you started with a total bankroll of $1000). However, I also wrote this week about grounding our expectations in reality, and why last week’s results may have been a bit lucky.

This week poses a fresh challenge. As has been discussed a fair bit on Twitter, last round saw every home team get up. With the way the AFL draw is organised in the early rounds, this means that every single one of Round 1’s winners goes into Round 2 as the away team, so each of them will be handicapped by FFSS’s Home Ground Advantage (HGA) calculator. HGA takes into account the distance travelled by each team, each team’s experience at the ground, and the AFL-designated home team (for games played at shared stadiums). This week, HGA varies between ~7 ratings points for Essendon at the G against Melbourne and ~110 ratings points for Freo at home to the Suns.1

My new computer predictor algorithm FFSS (Figuring Footy Scoring Shots) fared pretty admirably in her first week of tipping, nailing 8 out of 9 possible results. Only a bit of Dangerfield-inspired magic late on Monday afternoon prevented a perfect start to the season.

Anybody who has read this blog before will know that before the upcoming round I publish expected win probabilities for each match to be played. These probabilities are calculated by looking at the FFSS ratings of both teams and accounting for the venue. For example, I gave Sydney a 75% chance of beating Collingwood at home. They of course went on to thump them by 80 points. So then, if Sydney were so good, why didn’t the model rate them even higher and know ahead of time that they would dispatch Collingwood with ease?

After almost 6 months of mind-numbingly football-free weekends, the 2016 season is set to get started this Thursday night at the MCG. Having recently unveiled my new team ranking model, FFSS, I now have a system by which to predict probabilities for upcoming matches.

I have translated my calculated probabilities into inferred match odds and compared these to the current prices offered by some of the bigger bookmakers around the country, highlighting any major discrepancies. The reason I have done this is not to recommend or even advocate having a bet on any particular team (although I will certainly talk about “good bets” and “value”). Rather, it is a way to explore the strengths and weaknesses of the model in greater detail.
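To make the probability-to-odds comparison concrete, here is a minimal Python sketch. It assumes decimal odds (the usual Australian format), and the function names and the bookmaker price of 1.50 are made up for illustration; this is not the actual FFSS code. The 75% figure is the Sydney probability quoted earlier.

```python
def fair_decimal_odds(win_probability: float) -> float:
    """Decimal odds at which a bet on this outcome breaks even on average."""
    if not 0.0 < win_probability <= 1.0:
        raise ValueError("win_probability must be in (0, 1]")
    return 1.0 / win_probability


def implied_probability(decimal_odds: float) -> float:
    """Win probability implied by a decimal price (ignores the bookie's margin)."""
    if decimal_odds <= 1.0:
        raise ValueError("decimal odds must be greater than 1")
    return 1.0 / decimal_odds


def expected_profit_per_unit(win_probability: float, bookmaker_odds: float) -> float:
    """Average profit per unit staked if the model's probability is correct."""
    return win_probability * bookmaker_odds - 1.0


if __name__ == "__main__":
    model_probability = 0.75   # the model's chance for Sydney, from the text
    bookmaker_price = 1.50     # hypothetical price, for illustration only

    print(round(fair_decimal_odds(model_probability), 2))
    print(round(implied_probability(bookmaker_price), 3))
    print(round(expected_profit_per_unit(model_probability, bookmaker_price), 3))
```

A positive expected profit is what I mean by “value”. Keep in mind that the implied probabilities across both teams in a match will usually add up to more than 100%, because the bookmaker builds a margin into the prices.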

Bookie prices can be seen as a general “public consensus” about what the true probabilities of a team winning a match are. When the model differs greatly from the public view it is good to know why. Is it seeing something else that the public are not valuing? Or, as you’ll see this week, is it entirely missing something that others are taking into account? If it’s the latter, then there is a clear improvement to be made; if the former, then I guess we’re on to a winner.

NOTE: All betting amounts will be discussed as unit bets, assuming you have 100 units to play with as your full bankroll. For example, if you have $10000 that you’re willing to lose over the year if worst comes to worst, then 1 unit is $100. A higher unit bet shows more confidence in the model’s assessment and the value to be made. If you are interested in betting as a serious money-building exercise, first I would question whether you really want to cope with the stress of the virtually guaranteed big losses you will experience week to week. If the answer to that is yes, then read as much as you can on bankroll management and the fractional Kelly criterion. You are very likely betting too much to be sustainable.
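For anyone curious how units and fractional Kelly fit together, here is a rough Python sketch. It uses the standard Kelly formula for decimal odds. The quarter-Kelly multiplier, the function names and the 1.50 price are my own illustrative assumptions, not necessarily how the recommended unit bets on this blog are sized.

```python
def unit_value(bankroll: float, units_in_bankroll: int = 100) -> float:
    """Dollar value of one unit, given a bankroll split into 100 units."""
    return bankroll / units_in_bankroll


def kelly_fraction(win_probability: float, decimal_odds: float) -> float:
    """Full-Kelly fraction of bankroll to stake; 0 when there is no edge."""
    net_odds = decimal_odds - 1.0                       # profit per unit on a win
    edge = win_probability * decimal_odds - 1.0         # expected profit per unit
    return max(edge / net_odds, 0.0)


def stake_in_units(
    win_probability: float,
    decimal_odds: float,
    kelly_multiplier: float = 0.25,   # assumed "quarter Kelly", illustration only
    units_in_bankroll: int = 100,
) -> float:
    """Fractional-Kelly stake expressed in units of a 100-unit bankroll."""
    full = kelly_fraction(win_probability, decimal_odds)
    return kelly_multiplier * full * units_in_bankroll


if __name__ == "__main__":
    units = stake_in_units(0.75, 1.50)      # hypothetical 75% chance at 1.50
    dollars = units * unit_value(10_000)    # the $10000 bankroll from the note
    print(units, dollars)
```

Scaling the full-Kelly stake down is the whole point of the fractional version: it gives up some long-run growth in exchange for much smaller swings, which matters a lot when the model's probabilities are themselves only estimates.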

Developing an accurate and realistic rating system is often a primary goal for a sports analyst. Just about every organised sport competition in the world has its own implicit rating system in which we expect “good” teams to be rated higher than poorer teams. In AFL footy we call this the ladder. The problem with using a team’s position on the ladder to infer how well it plays is that the ladder is sorted primarily by wins. While winning lots of games is important (#analysis), how many games a team has won previously is not always the best indicator of how many they’ll win in the future. This is especially true of a competition like the AFL which uses an uneven draw. A team towards the top of the ladder that has yet to face any other difficult teams has obviously not proven itself to be a strong side.

A “true” rating system provides us with a wonderful descriptive and predictive tool. We can compare teams over time. (Just how does this year’s Hawthorn team hold up against Brisbane of the early 2000s?) We can map changes in team rating after notable player and administrative changes. (How important will Patrick Dangerfield’s move from Adelaide to Geelong be for both sides?) And perhaps most tantalising for some, we can calculate implied probabilities for upcoming matches and even seasons and make a profit betting against inefficiencies in sports-betting odds. (What is a fair price for Hawthorn to make it 4 in a row next season?)

Given this motivation, I have created a few different types of rating systems that I have been testing out over the last season. Today I’ll introduce you to the simplest of these, a basic Elo model which I have dubbed “SimpElo”1, and show you the impressive results that can be achieved with just a few basic principles.
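For readers who haven’t met Elo before, the textbook version of the idea fits in a few lines of Python. To be clear, this is a generic sketch and not the SimpElo code itself: the scale of 400, the K-factor of 32 and the `home_advantage` parameter are conventional or hypothetical values chosen for illustration.

```python
def expected_score(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Standard Elo expected result for team A (between 0 and 1)."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / scale))


def update_ratings(
    home_rating: float,
    away_rating: float,
    home_result: float,            # 1.0 home win, 0.5 draw, 0.0 home loss
    k: float = 32.0,               # assumed K-factor, illustration only
    home_advantage: float = 0.0,   # hypothetical bonus in ratings points
) -> tuple[float, float]:
    """Return the new (home, away) ratings after one match."""
    expected_home = expected_score(home_rating + home_advantage, away_rating)
    change = k * (home_result - expected_home)
    # Whatever the home team gains, the away team loses, and vice versa.
    return home_rating + change, away_rating - change


if __name__ == "__main__":
    # Two evenly rated teams, home side wins.
    print(update_ratings(1500.0, 1500.0, 1.0))
```

The key property is that a win against a strong opponent moves a rating more than a win against a weak one, which is exactly what the ladder fails to capture.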